Think about the last ten visitors to your online store. You probably picture ten people browsing on their phones or laptops. But in 2026, the reality is quite different. Five of those “visitors” weren’t people at all—they were bots.

With automated agents now generating 51% of all web traffic, the role of the website crawler has shifted from a simple search indexer to a complex ecosystem of digital buyers, AI trainees, and, unfortunately, malicious scrapers. For e-commerce brands, this isn’t just a technical update; it’s a new way of doing business. It requires a strategy that welcomes the helpful agents while firmly blocking the malicious bots that make up 37% of traffic and try to exploit your data.

Key Takeaways:

- The Post-Human Shift: Automated traffic now dominates the web (51%), fundamentally changing how products are discovered and inventory is managed.

- The Three Categories: Bot traffic is no longer uniform; it is split into Good (Search), Grey (AI Training/Fetchers), and Bad (Malicious Scrapers).

- The “Zero-Click” Reality: AI “Answer Engines” are reducing traditional referral clicks, making “Answer Engine Optimization” (AEO) a critical new discipline.

- Infrastructure Strategy: Blocking Googlebot is no longer a viable option. Optimization now requires distinct strategies for Training Bots (ingesting data) versus User-Action Fetchers (checking prices).

- Security vs. Visibility: Success relies on building “Agent-Ready” infrastructure (via Schema and Server-Side Rendering) combined with rigorous behavioral security to stop inventory hoarding.

The New Reality: The “Post-Human” Web

The foundational assumption of e-commerce—that your traffic consists primarily of humans browsing your digital storefront—is no longer valid. Over the past year, we crossed a historic threshold: 51% of all internet traffic is now automated. The “Post-Human Web” is here.

For e-commerce managers, this isn’t a vanity metric you can safely ignore. It fundamentally changes how you must manage infrastructure costs and marketing data.

The “Invisible Audience” and the Dark Funnel

The most dangerous side effect of this shift is the creation of an “Invisible Audience.” Traditional analytics platforms like Google Analytics 4 (GA4) rely on client-side JavaScript to track user sessions. However, the vast majority of modern AI fetchers (bots that look up information on behalf of users) skip JavaScript, including marketing tags, to conserve computing resources.

This creates a massive “Dark Funnel.” An AI agent might visit your site 1,000 times to verify pricing or availability for potential buyers, but your analytics dashboard will show zero sessions. The only evidence of this activity resides in your server logs.

- The Measurement Gap: Industry forecasts have predicted that traditional search engine volume will drop by 25% by 2026 as users shift to chatbots. If you aren’t tracking these bot interactions, you risk losing visibility into a significant share of your potential market.

- The Financial Toll: This “invisible” traffic isn’t free. U.S. publishers are estimated to spend over $240 million annually on unwanted bandwidth costs driven solely by AI crawlers.

Anatomy of a Bot: How Website Crawlers Work

To manage this traffic, you must understand the mechanism. A modern crawler (or “spider”) follows a specific lifecycle. Understanding this cycle is the only way to ensure your products are visible to the “Good” bots while blocking the “Bad” ones.

Phase 1: Discovery (The Map)

Before a bot can buy or index, it must find you.

- Links: The traditional method—following links from other known pages.

- Sitemaps: The XML roadmap you provide.

- IndexNow: Unlike the old “pull” method where bots visited on their own schedule, IndexNow allows your store to “push” updates instantly. Major platforms like Shopify and Amazon have integrated this to alert Bing and Yandex the moment a product goes out of stock, ensuring AI agents don’t recommend unavailable items.
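For the Sitemaps method, here is a minimal sketch of generating an XML sitemap with Python’s standard library. The `urlset`, `url`, `loc`, and `lastmod` elements and the namespace come from the sitemaps.org protocol; the store URLs and dates are hypothetical placeholders.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML for an iterable of (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # lastmod hints to crawlers which pages changed since their last visit
        ET.SubElement(entry, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical product URLs and modification dates
sitemap_xml = build_sitemap([
    ("https://shop.example.com/products/leather-boots", "2026-01-15"),
    ("https://shop.example.com/products/running-shoes", "2026-01-20"),
])
print(sitemap_xml)
```

Keeping `lastmod` accurate is what makes the map useful: a sitemap that stamps every URL with today’s date gives crawlers no signal about what actually changed.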

Phase 2: Rendering (The “Soft 404” Trap)

This is where 2025 has brought the biggest technical change.

- The Challenge: Modern e-commerce sites rely heavily on JavaScript (React, Vue) to show content.

- The Update: In December 2025, Google updated its documentation with a critical warning: Pages with non-200 HTTP status codes might skip rendering entirely.

- What this means: If your server returns a “404 Not Found” status code but you rely on JavaScript to display a “We’re sorry, this item is out of stock” message or a list of related products, Googlebot may never see that content. It stops at the status code.

- The Fix: Make sure critical content and directives (status codes, canonical tags, and any messaging you want crawlers to see) are delivered in the server’s HTML response. Do not rely on client-side JavaScript to “fix” technical SEO issues.
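A minimal sketch of that fix, assuming a hypothetical `render_product_page` handler and an in-memory catalog: when a product is gone, the server sends the 404 status and the explanatory message and fallback links in the same raw HTML response, so none of it depends on JavaScript ever running.

```python
from html import escape

# Hypothetical in-memory catalog and fallback links; a real store would query its database
CATALOG = {"leather-boots": {"title": "Leather Boots", "price": "129.00"}}
FALLBACK_LINKS = ["/collections/boots", "/products/chelsea-boots"]


def render_product_page(slug):
    """Return (status_code, html) with all crawler-relevant content in the server's HTML."""
    product = CATALOG.get(slug)
    if product is None:
        # Status code, message, and links are sent together in the raw HTML,
        # so they don't depend on client-side JavaScript running
        links = "".join(
            f'<li><a href="{escape(u)}">{escape(u)}</a></li>' for u in FALLBACK_LINKS
        )
        return 404, (
            "<html><body><h1>Sorry, this item is no longer available</h1>"
            f"<ul>{links}</ul></body></html>"
        )
    title, price = escape(product["title"]), escape(product["price"])
    return 200, f"<html><body><h1>{title}</h1><p>Price: ${price}</p></body></html>"


status, page = render_product_page("discontinued-sandals")
print(status)
```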

Phase 3: The “Attention Span” (Crawl Budget)

Every bot has a limit. “Crawl Budget” is the number of pages a bot is willing and able to crawl on your site within a given period.

- The Facet Trap: E-commerce sites are notorious for wasting this budget on “Faceted Navigation”—endless combinations of filters (e.g., /shoes/red/size-10/velcro). To a bot, every combination looks like a new page.

- The Solution: If you have millions of filter combinations, you are likely trapping the crawler in a loop, preventing it from indexing your new high-margin products. Strict robots.txt rules are required to focus the bot’s attention where it generates revenue.
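The arithmetic behind the facet trap is multiplicative. As a rough sketch with hypothetical facet counts: if each facet can be left unset or set to one of its values, a single category page produces the product of (values + 1) across all facets.

```python
from math import prod

# Hypothetical facets on a single /shoes category page: facet -> number of values
FACETS = {"color": 12, "size": 15, "material": 6, "closure": 4, "sort": 5}


def facet_url_count(facets):
    """Count distinct filter URLs when each facet is either unset or set to one value."""
    # "+ 1" accounts for the facet being left unset
    return prod(n + 1 for n in facets.values())


print(facet_url_count(FACETS))  # 13 * 16 * 7 * 5 * 6 = 43680
```

That is roughly 43,680 crawlable URLs for one category, before multi-select filters or reordered parameters multiply it further. Across a full catalog, this is how stores end up with millions of combinations.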

The Taxonomy of Traffic: Good, Bad, and Grey

To effectively manage the “Post-Human Web,” we must stop treating all bots as a single category. In 2026, automated traffic has fractured into three distinct species. Each requires a different strategy: one you feed, one you negotiate with, and one you block.

The Good: Search & Social (4.5% of Traffic)

While AI makes headlines, Googlebot remains the dominant economic force, driving nearly 90% of traditional search referrals.

- The Dual Mandate: In 2026, Googlebot is no longer just indexing for blue links. It is simultaneously harvesting data to “ground” AI Overviews. It is now recognized as the highest volume AI training crawler on the web.

- The Mobile vs. Desktop Gap: While Google is mobile-first, Bingbot often retains a desktop-first indexing model. If your site uses “adaptive serving” (showing less content on mobile to save load time), you might be inadvertently hiding data from Bing’s AI copilot.

The Grey: AI Training vs. Fetching

This is the most disruptive category. “Grey bots” aren’t trying to crash your site, but they often take more than they give. We must distinguish between two types:

- The “Vacuum Cleaners” (Training Bots): Bots like GPTBot, ClaudeBot, and Bytespider ingest massive amounts of data to train future models. Their ROI for merchants is often negative. Some datasets show large LLM bots performing up to 25,000 crawls for every single referral click. They consume bandwidth but rarely send shoppers.

- The “Agents” (User-Action Fetchers): These are high-value bots (e.g., ChatGPT-User). They visit specific pages in real-time because a human asked, “How much are the Sony XM5 headphones?” Blocking these agents means blocking a potential sale.
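A rough sketch of sorting requests into these buckets by User-Agent token. The token lists are illustrative and incomplete (check each vendor’s documentation for current names), and User-Agent strings are trivially spoofed, so treat the result as a first-pass label for log analysis, not an access-control decision.

```python
# Illustrative, incomplete token lists; vendors add and rename bots over time
SEARCH = ("Googlebot", "Bingbot")
TRAINING = ("GPTBot", "ClaudeBot", "Bytespider", "CCBot")
FETCHERS = ("ChatGPT-User", "Claude-User", "Perplexity-User")


def classify_user_agent(user_agent):
    """Return a coarse traffic category from case-insensitive substring matches."""
    ua = user_agent.lower()
    for label, tokens in (("search", SEARCH), ("ai-training", TRAINING), ("ai-fetcher", FETCHERS)):
        if any(token.lower() in ua for token in tokens):
            return label
    return "unknown"


print(classify_user_agent("Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"))
```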

The Bad: The Malicious Majority (37%)

Malicious bots now account for 37% of all internet traffic.

- Simple Bots (The GenAI Wave): Surprisingly, “dumb” scripts have surged to 45% of bad bot traffic. Why? Generative AI coding tools allow anyone to write a scraping script in seconds, flooding sites with low-quality attacks.

- Advanced Persistent Bots: These use residential proxies (hijacked home IP addresses) to mimic human behavior, making them nearly impossible to block with standard firewalls. They are responsible for Account Takeovers (ATO) and inventory hoarding (“Grinch Bots”).

The 12 Best Strategies for Managing Website Crawlers

Managing this ecosystem requires moving from “Defense” to “Orchestration.” Here are the 12 essential strategies for 2026.

1. Audit Your “Invisible Audience”

Stop relying solely on Google Analytics. GA4 only tracks visitors who execute JavaScript. Most AI agents do not.

- Action: Perform a monthly Log Analysis. Look for User-Agents like GPTBot or OAI-SearchBot in your server access logs. Compare this volume to your actual referral traffic to calculate the real cost of serving these bots.
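A minimal sketch of that monthly count, assuming your server writes the widely used “combined” access-log format and that you supply the bot tokens to look for. The sample log lines are fabricated for illustration.

```python
import re
from collections import Counter

# Combined log format: IP ident user [time] "request" status bytes "referer" "user-agent"
LINE_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)
BOT_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "Bytespider", "Googlebot")


def count_bot_hits(lines, tokens=BOT_TOKENS):
    """Count requests per bot token; lines that don't match the format are skipped."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.match(line)
        if not match:
            continue
        ua = match.group("ua")
        for token in tokens:
            if token in ua:
                hits[token] += 1
                break
    return hits


# Fabricated sample lines
sample = [
    '203.0.113.5 - - [12/Jan/2026:10:00:01 +0000] "GET /products/boots HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; GPTBot/1.1; +https://openai.com/gptbot"',
    '203.0.113.5 - - [12/Jan/2026:10:00:02 +0000] "GET /products/shoes HTTP/1.1" 200 4810 "-" "Mozilla/5.0; compatible; GPTBot/1.1; +https://openai.com/gptbot"',
    '198.51.100.7 - - [12/Jan/2026:10:00:03 +0000] "GET /products/boots HTTP/1.1" 200 5120 "-" "Mozilla/5.0; compatible; ChatGPT-User/1.0; +https://openai.com/bot"',
]
print(count_bot_hits(sample))
```

Dividing each count by the referral sessions your analytics attribute to the same platform gives you the crawl-to-refer ratio used in strategy 11.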

2. Master the robots.txt (But Know Its Limits)

The robots.txt file is a “gentleman’s agreement,” not a security wall. Legitimate bots respect it; malicious ones ignore it.

- Action: Use specific directives. Instead of a blanket User-agent: *, explicitly allow the high-value agents (User-agent: ChatGPT-User) while potentially disallowing the heavy training scrapers if your server load is too high.
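A minimal sketch that renders robots.txt from a policy table, so the rules live in version control next to the rest of your code. The agent names come from this article; disallowing GPTBot while allowing ChatGPT-User is an example policy, not a recommendation for every store, and the file remains advisory.

```python
# Example policy: user-agent token -> ordered (directive, path) rules; adjust to your strategy
POLICY = {
    "ChatGPT-User": [("Allow", "/")],
    "GPTBot": [("Disallow", "/")],
    "*": [("Disallow", "/cart"), ("Disallow", "/checkout"), ("Allow", "/")],
}


def build_robots_txt(policy, sitemap_url=None):
    """Render a robots.txt string with one group per user agent."""
    groups = []
    for agent, rules in policy.items():
        lines = [f"User-agent: {agent}"]
        lines += [f"{directive}: {path}" for directive, path in rules]
        groups.append("\n".join(lines))
    text = "\n\n".join(groups)
    if sitemap_url:
        text += f"\n\nSitemap: {sitemap_url}"
    return text + "\n"


print(build_robots_txt(POLICY, "https://shop.example.com/sitemap.xml"))
```

Under the Robots Exclusion Protocol (RFC 9309), a crawler obeys only the most specific group that names it, so GPTBot in this example ignores the `*` rules entirely. Repeat any shared rules inside each named group.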

3. Implement “Agent-Ready” Schema

If your site is an API for bots, Schema.org is the documentation. In 2025, Google’s “Merchant Listings” update made structured data critical infrastructure.

- Action: Ensure every product page has valid MerchantReturnPolicy markup. Google now extracts return windows and fees directly from this code to display in search results. If your schema is broken, you disappear from the rich shopping grid.
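A sketch of the JSON-LD a product template might emit, built in Python so the markup is generated from the same data as the visible page. The product, price, US-only scope, and 30-day free-return policy are hypothetical. The types and properties (`Product`, `Offer`, `MerchantReturnPolicy`, `merchantReturnDays`, `returnFees`, and so on) are schema.org vocabulary, but check Google’s merchant listing documentation for the fields it currently requires.

```python
import json


def product_jsonld(name, price, currency, in_stock, return_days):
    """Build schema.org Product markup with an Offer and a MerchantReturnPolicy."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock",
            "hasMerchantReturnPolicy": {
                "@type": "MerchantReturnPolicy",
                "applicableCountry": "US",  # hypothetical: policy applies to US orders
                "returnPolicyCategory": "https://schema.org/MerchantReturnFiniteReturnWindow",
                "merchantReturnDays": return_days,
                "returnMethod": "https://schema.org/ReturnByMail",
                "returnFees": "https://schema.org/FreeReturn",
            },
        },
    }


markup = product_jsonld("Full-Grain Leather Boots", "129.00", "USD", True, 30)
# Embed the result in the page's server-rendered HTML inside a JSON-LD script tag
print(f'<script type="application/ld+json">{json.dumps(markup)}</script>')
```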

4. Adopt the IndexNow Protocol

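Waiting for crawlers to come back on their own schedule is so 2020. IndexNow is a “Push” protocol adopted by Bing, Yandex, and major platforms like Shopify. (Google does not currently participate in IndexNow, so your XML sitemap still matters for Googlebot.)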

- Action: Configure IndexNow to instantly notify search engines when a product goes out of stock. This prevents AI agents from recommending products you can’t sell, reducing customer frustration and bounce rates.
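A minimal sketch of an IndexNow submission using only the standard library. The JSON body fields (`host`, `key`, `keyLocation`, `urlList`) and the shared `api.indexnow.org` endpoint follow the public IndexNow protocol; the host, key, and URL are placeholders. You also need to host the key file at `keyLocation` so engines can verify you own the site. The request below is built but not sent.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls):
    """Prepare (but do not send) a POST notifying IndexNow engines of changed URLs."""
    payload = {
        "host": host,
        "key": key,
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


# Placeholder values: a product that just went out of stock
req = build_indexnow_request(
    "shop.example.com",
    "a1b2c3d4e5f6",  # hypothetical key; generate and host your own
    ["https://shop.example.com/products/leather-boots"],
)
# To actually submit: urllib.request.urlopen(req)
```

In practice you would call this from the same inventory event that flips a product’s stock status, batching URLs rather than sending one request per change.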

5. Enforce Server-Side Rendering (SSR) for Price & Stock

Bing and many smaller AI agents struggle to render complex client-side JavaScript.

- Action: Ensure that critical decision data—Price, Availability, and Product Title—is rendered on the server (in the raw HTML). If a bot has to wait 3 seconds for a React script to load the price, it will often time out and move to a competitor.
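One way to check this is to read the raw HTML the way a non-rendering bot does: take the server’s response without executing scripts and confirm the title and price appear in the visible markup. A minimal sketch using the standard-library HTML parser; the two sample pages are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text outside <script> and <style>: what a non-rendering bot can read."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth:
            self.chunks.append(data)


def missing_critical_fields(raw_html, required):
    """Return the required strings that are absent from the server-sent visible text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks)
    return [value for value in required if value not in text]


ssr_page = "<html><body><h1>Leather Boots</h1><p>$129.00</p><p>In stock</p></body></html>"
csr_page = '<html><body><div id="root"></div><script src="/bundle.js"></script></body></html>'
print(missing_critical_fields(ssr_page, ["Leather Boots", "$129.00"]))  # []
print(missing_critical_fields(csr_page, ["Leather Boots", "$129.00"]))  # ['Leather Boots', '$129.00']
```

This checks visible text only. JSON-LD inside a script tag is a separate server-side signal, and the JSON-LD syntax check under Schema Validation covers it.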

6. Differentiate Training vs. Fetching

Not all AI bots are equal.

- Action: Consider a “Nuanced Blocking” strategy. You might choose to block GPTBot (the training scraper) to save bandwidth, while whitelisting ChatGPT-User (the live agent) to capture sales. Check your CDN (e.g., Cloudflare) for “One-Click” AI controls that allow this granularity.
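Because any client can claim to be ChatGPT-User, an allowlist should check the source IP as well as the User-Agent. Some vendors, OpenAI among them, publish the IP ranges their bots use. The sketch below assumes you have loaded those ranges into a dictionary; the ranges shown are reserved documentation addresses, not real ones.

```python
import ipaddress

# Placeholder ranges (RFC 5737 documentation blocks); replace with each vendor's published list
PUBLISHED_RANGES = {
    "ChatGPT-User": ["192.0.2.0/24"],
    "GPTBot": ["198.51.100.0/24"],
}


def is_verified_bot(user_agent, client_ip, ranges=PUBLISHED_RANGES):
    """True only if the UA names a known bot AND the IP is inside that bot's published ranges."""
    ip = ipaddress.ip_address(client_ip)
    for token, cidrs in ranges.items():
        if token in user_agent:
            return any(ip in ipaddress.ip_network(cidr) for cidr in cidrs)
    return False


ua = "Mozilla/5.0; compatible; ChatGPT-User/1.0; +https://openai.com/bot"
print(is_verified_bot(ua, "192.0.2.44"), is_verified_bot(ua, "203.0.113.9"))
```

A request that claims a bot identity but fails this check is a strong candidate for blocking, because legitimate operators have no reason to send traffic from outside their own ranges.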

7. Harden the API Against Logic Attacks

Bad bots don’t hack your server; they abuse your logic. 44% of advanced bot attacks now target APIs.

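- Action: Place strict rate limits on your “Add to Cart” and “Login” API endpoints. A human can’t add 50 items to a cart in one second. If a session, account, or IP address does this, throttle or block it immediately. Keying limits on more than the IP matters because, as the next strategy explains, bad bots rotate residential IPs.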
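A minimal sliding-window limiter for an add-to-cart endpoint. The limit of 5 requests per second and the choice of key (a session ID here) are hypothetical. Production systems usually keep these counters in a shared store such as Redis rather than in process memory, so that every server enforces the same limit.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` events per `window` seconds for each key."""

    def __init__(self, limit=5, window=1.0, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock
        self.events = defaultdict(deque)

    def allow(self, key):
        now = self.clock()
        recent = self.events[key]
        # Drop timestamps that have slid out of the window
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        if len(recent) >= self.limit:
            return False  # over the limit: throttle, challenge, or block this key
        recent.append(now)
        return True


limiter = SlidingWindowLimiter(limit=5, window=1.0)
# 50 add-to-cart calls in a tight loop: only the first 5 within the window succeed
results = [limiter.allow("session-abc") for _ in range(50)]
print(results.count(True))  # 5
```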

8. Use Behavioral Fingerprinting

Since bad bots now use residential IPs (looking like real home users), IP blocking is ineffective.

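- Action: Use security tools that analyze behavior and client signals (mouse movements, device signals such as battery status where the browser exposes it, TLS fingerprints). Scripted sessions tend to move the cursor in unnaturally straight lines and report static or missing device signals; humans produce messier, more varied patterns.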
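Commercial tools combine dozens of signals, but the “straight line” idea can be illustrated with one toy feature: the ratio of straight-line distance to actual path length for a pointer trajectory, where 1.0 means a perfectly straight path. The threshold and sample paths are hypothetical. On its own, this feature is easy for a bot to evade, and many bots send no pointer events at all, which is itself a signal.

```python
import math


def straightness(points):
    """Direct distance / traveled distance for (x, y) pointer samples; 1.0 = perfectly straight."""
    traveled = sum(math.dist(a, b) for a, b in zip(points, points[1:]))
    if traveled == 0:
        return 1.0
    return math.dist(points[0], points[-1]) / traveled


def looks_scripted(points, threshold=0.99):
    """Toy check; real systems learn thresholds from labeled traffic and many more features."""
    return straightness(points) >= threshold


bot_path = [(0, 0), (50, 50), (100, 100)]
human_path = [(0, 0), (30, 45), (55, 48), (80, 90), (100, 100)]
print(looks_scripted(bot_path), looks_scripted(human_path))  # True False
```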

9. Deploy data-nosnippet Strategically

You want AI to cite you, but you also want the click.

- Action: Add the data-nosnippet HTML attribute to the elements (such as a div, span, or section) that hold your unique, proprietary content, like deep-dive guides or expert reviews. Google can still index the page, but it won’t show that text in snippets or AI summaries, which encourages the user to click through to read it.

10. Manage Faceted Navigation (The Budget Killer)

Infinite filter combinations (Red > Size 10 > Velcro > Under $50) create “Spider Traps” that waste crawl budget.

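- Action: Use the canonical tag to point all filter variations back to the main category page, or use robots.txt to disallow crawling of URL parameters (e.g., Disallow: /*?sort=). Treat these as alternatives for any given URL: if robots.txt blocks a URL, the bot never fetches it and so never sees its canonical tag.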
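For the canonical-tag route, here is a sketch of deriving the canonical URL by stripping filter and sort parameters. Which parameters count as facets is a per-store decision, and the parameter names below are hypothetical. Parameters that change the content itself, like `page`, are kept.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical facet and sort parameters to strip
FACET_PARAMS = {"color", "size", "material", "closure", "sort"}


def canonical_url(url):
    """Drop facet parameters so every filter variation points back to the category page."""
    parts = urlsplit(url)
    kept = [
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k not in FACET_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


url = "https://shop.example.com/shoes?color=red&size=10&sort=price&page=2"
print(f'<link rel="canonical" href="{canonical_url(url)}">')
```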

11. Monitor Your “Crawl-to-Refer” Ratio

Treat bots like paid acquisition channels. Is the “Cost per Crawl” worth it?

- Action: If a specific bot (e.g., Bytespider) is hitting your site 1 million times a month but sending zero traffic, it is a parasite. Block it.
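The ratio itself is simple arithmetic: crawl requests divided by referral visits. A sketch with hypothetical monthly figures for three unnamed crawlers (crawl counts would come from your logs, referrals from your analytics). The tolerable ratio is a business decision; the 10,000 cut-off here is only an example.

```python
def crawl_to_refer(crawls, referrals):
    """Crawl requests per referred visit; infinite when a bot sends no traffic at all."""
    return float("inf") if referrals == 0 else crawls / referrals


# Hypothetical monthly numbers: crawler -> (crawl requests, referral sessions)
monthly = {
    "search-crawler": (400_000, 80_000),
    "training-crawler": (250_000, 10),
    "unknown-scraper": (1_000_000, 0),
}
for bot, (crawls, refs) in monthly.items():
    ratio = crawl_to_refer(crawls, refs)
    verdict = "review/block" if ratio > 10_000 else "keep"
    print(f"{bot}: {ratio:,.0f} crawls per referral -> {verdict}")
```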

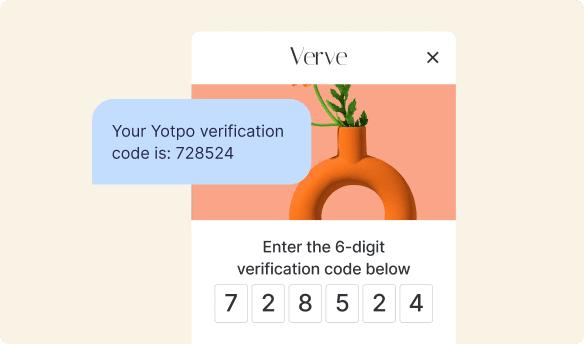

12. Secure Your Loyalty Program

Account Takeover (ATO) attacks have increased by 40% as bots try to drain loyalty points.

- Action: Implement multi-factor authentication (MFA) or CAPTCHA specifically on the “Redeem Points” action. Protecting your customers’ earned value is critical for retention.

Answer Engine Optimization (AEO): Feeding the New Crawlers

As we move deeper into 2026, the goal of a crawler has shifted. It is no longer just looking for keywords to rank a list of blue links; it is looking for facts to construct a direct answer. This gives rise to a new discipline: Answer Engine Optimization (AEO).

The “Trust Deficit” in AI Search

While AI Overviews and chatbots are convenient, they have a credibility problem. Recent data reveals that 53% of consumers distrust AI-powered search results, citing concerns over hallucinations and bias. Yet, in a paradoxical twist, 51% of consumers still use them for initial product discovery.

This creates a unique challenge for e-commerce brands: You must be visible to the AI (so you get found), but you must provide human validation (so you get trusted).

Strategies for “Generative Engine Optimization” (GEO)

To win in this environment, your content strategy must evolve from “SEO” to “AEO” (also called “Generative Engine Optimization,” or GEO).

- Prioritize “Information Gain”: AI models reward content that provides new information, not just repeated consensus. Instead of generic product descriptions (“Great leather boots”), publish dense, factual specifications (“Full-grain leather, 1.5-inch heel, water-resistant coating tested for 4 hours”).

- The “Q&A” Format: Structure your content the way users ask questions. An FAQ section on a product page is no longer just for support; it is a “snippet farm” for AI agents.

- Bad: “Shipping Policy.”

- Good: “How long does shipping take to New York?” (Direct question = Direct answer).

- Zero-Click Optimization: Acknowledge that for some queries (e.g., “Is this gluten-free?”), the user may never click your link. Ensure your Brand Name is prominent in the answer itself so you build mental availability even without the traffic.

How Yotpo Helps: Fresh Content for the AI Era

This is where the “Post-Human Web” reconnects with the human element. AI agents are voracious consumers of data, but they struggle with freshness and nuance. They cannot “experience” a product; they can only read about it.

Reviews as the “Freshness Signal”

User-Generated Content (UGC) is the perfect fuel for AI crawlers. When a customer leaves a review saying, “These running shoes helped my plantar fasciitis,” they are creating a semantic link between your product and a specific medical condition—a link that your marketing team likely never wrote.

- Semantic Richness: Yotpo’s Smart Prompts are designed to capture these high-value topics. By using AI to ask buyers specific questions (e.g., “How was the fit?” or “Did the color match the photo?”), you generate 4x more mentions of the specific details that AI search engines look for.

- The “Trust” Bridge: As Amit Bachbut, an e-commerce expert, notes regarding the 2026 landscape:

“Trust is the new currency, and that trust is built one review at a time. Shoppers in 2026 are inherently skeptical; they use the authentic voice of other customers to validate every single purchase. Your ability to collect and showcase that social proof is no longer a feature—it’s your entire foundation.”

The Metric That Matters

Ultimately, feeding the bots is only useful if it converts the humans. Data shows that shoppers who interact with this content convert at a 161% higher rate than those who don’t. By ensuring your reviews are indexable and marked up with the correct Schema, you turn your customer feedback into a permanent, evolving asset that “trains” the search engines to recommend your brand first.
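For reviews, “marked up with the correct Schema” usually means adding `aggregateRating` and individual `review` entries to the Product markup. A sketch with hypothetical review data; the property names (`AggregateRating`, `ratingValue`, `reviewCount`, `Review`, `reviewRating`, `reviewBody`) are schema.org vocabulary. If a reviews platform already injects this markup on your pages, avoid emitting a duplicate copy.

```python
import json


def review_markup(product_name, reviews):
    """Build Product JSON-LD from (author, rating, text) tuples; requires at least one review."""
    if not reviews:
        raise ValueError("need at least one review to compute aggregateRating")
    ratings = [rating for _, rating, _ in reviews]
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": product_name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": round(sum(ratings) / len(ratings), 1),
            "reviewCount": len(reviews),
        },
        "review": [
            {
                "@type": "Review",
                "author": {"@type": "Person", "name": author},
                "reviewRating": {"@type": "Rating", "ratingValue": rating, "bestRating": 5},
                "reviewBody": text,
            }
            for author, rating, text in reviews
        ],
    }


# Hypothetical reviews
data = review_markup("Trail Running Shoes", [
    ("Dana", 5, "These running shoes helped my plantar fasciitis."),
    ("Lee", 4, "Fit runs slightly small; color matched the photo."),
])
print(json.dumps(data, indent=2))
```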

Essential Tools to Try

Managing the “Post-Human Web” requires a specific stack. You cannot rely on marketing analytics alone; you need infrastructure tools that see the raw traffic before it hits your site. Here are the essential categories for 2026.

1. The “Health Monitors” (Free & Essential)

- Google Search Console (GSC): Your primary dashboard. Its “Merchant Listings” and “Product Snippets” reports alert you when return policy, shipping, or other product fields in your structured data are missing or invalid.

- Bing Webmaster Tools: Often overlooked, but critical for the IndexNow protocol. Use this to configure instant indexing and verify that your server-side rendering is working for their “desktop-first” bot.

2. Log Analysis (Finding the Invisible Audience)

Because most automated traffic doesn’t execute JavaScript, it’s invisible to GA4. You need a log analyzer.

- Screaming Frog Log File Analyser: A staple for technical SEOs. It ingests your raw server logs to visualize exactly which bots (Googlebot, GPTBot, ClaudeBot) are hitting which pages and how often they encounter errors.

3. Bot Management & Security (The Gatekeepers)

You need a firewall that understands behavior, not just IP addresses.

- Cloudflare: Beyond their CDN, Cloudflare’s “Bot Fight Mode” and new AI controls allow you to toggle specific scrapers on or off with a single click. New reports highlight their ability to identify “Grey” bots that mimic human behavior.

- Imperva: The source of the definitive “Bad Bot Report,” Imperva specializes in mitigating sophisticated “Grinch Bots” that hoard inventory during high-traffic drops.

4. Schema Validation

- Schema.org Validator: Before you deploy code, test it here. A single missing comma in your JSON-LD can prevent an AI agent from reading your price, rendering you invisible to the high-intent fetchers.
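You can also catch the missing-comma class of error before deployment by extracting every JSON-LD block from a rendered page and parsing it. A minimal pre-deploy check with the standard library. It verifies JSON syntax only, not whether the schema.org types and properties are correct, so it complements the validator rather than replacing it.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the contents of <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self.in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self.in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self.in_jsonld = False

    def handle_data(self, data):
        if self.in_jsonld:
            self.blocks[-1] += data


def jsonld_errors(html):
    """Return (block_index, error_message) for every JSON-LD block that is not valid JSON."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    errors = []
    for i, block in enumerate(parser.blocks):
        try:
            json.loads(block)
        except json.JSONDecodeError as exc:
            errors.append((i, str(exc)))
    return errors


good = '<script type="application/ld+json">{"@type": "Offer", "price": "129.00"}</script>'
bad = '<script type="application/ld+json">{"@type": "Offer" "price": "129.00"}</script>'  # missing comma
print(jsonld_errors(good), jsonld_errors(bad))
```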

The Future: Agentic Commerce & The Legal Battlefield

As we look toward 2030, the “Website Crawler” is evolving into a “Digital Shopper.” Projections indicate that Agentic Commerce—where AI agents make purchases on behalf of humans—could generate $1 trillion in U.S. retail revenue by 2030.

The Pivot: From Blocking to Enabling

In late 2025, we saw a massive strategic pivot from Amazon. Initially, the retail giant blocked AI crawlers to protect its pricing data. However, realizing that users were shifting to AI assistants for shopping, Amazon began hiring for “agentic commerce partnerships.”

- The Lesson: If you block the agents entirely, you block the customers. The future isn’t about building a firewall; it’s about building an API for the “Zero-Interface” shopper.

The “Pay-to-Crawl” Economy

We are entering an era where data access is transactional. Companies like TollBit are now brokering deals where AI companies pay publishers for a license to crawl their content.

- Implication for Merchants: Large e-commerce aggregators may soon be able to charge OpenAI or Anthropic for a real-time feed of their inventory, turning their product catalog into a revenue stream itself.

The Legal Showdown: Google vs. SerpApi

The rules of engagement are being rewritten in court. In late 2025, Google filed a lawsuit against SerpApi, a company that scrapes search results to resell the data.

- The Stakes: If Google wins, it sets a precedent that data on the public web is not free for the taking if security measures are bypassed. This could empower merchants to sue competitors who aggressively scrape their pricing, potentially ending the “wild west” era of price undercutting.

Conclusion

The “Website Crawler” of 2026 is a Janus-faced entity. One face represents your ultimate customer: a tireless, intelligent AI agent that can match your products to user needs with remarkable precision. The other face represents an existential threat: a resource-draining, data-stealing automaton that can hollow out your inventory and skew your analytics.

For e-commerce leaders, the era of passive coexistence with crawlers is over. Success now requires a dual strategy: actively nurturing the helpful agents with structured data and server-side rendering, while ruthlessly filtering the malicious actors using behavioral fingerprinting. The digital storefront of the future is not just for people; it is for the machines that serve them.

Frequently Asked Questions

What is the difference between a crawler and a scraper?

A crawler (or spider) typically visits a website to index content for search engines (like Googlebot) or to feed AI models. A scraper is a bot designed to extract specific data—such as prices, inventory levels, or customer reviews—often for competitive intelligence or unauthorized resale. While crawlers generally respect robots.txt rules, malicious scrapers do not.

Should I block GPTBot?

It depends on your strategy. GPTBot is primarily a training crawler used to build OpenAI’s models. If you block it, your content may not be included in future knowledge bases, potentially hurting long-term brand awareness. However, GPTBot is known to be aggressive with bandwidth. A balanced approach is to block the training bot (GPTBot) if server costs are high, but whitelist the user-agent fetcher (ChatGPT-User) to ensure you don’t miss live sales queries.

How do I know if bots are slowing down my site?

Check your server logs for a spike in 5xx errors or high latency times during specific windows. If you see thousands of requests from a single IP range or user-agent (like Bytespider) coinciding with a performance drop, you are likely under a “Denial of Service” effect from aggressive crawling.

Does Googlebot execute JavaScript?

Yes, but with caveats. In late 2025, Google clarified that if a page returns a non-200 status code (like a 404), it may stop processing and never execute the JavaScript. This means if you rely on React or Vue to render “Out of Stock” messages or “Related Products” on an error page, Googlebot might never see them.

How does “Agentic Commerce” change my CRO strategy?

Conversion Rate Optimization (CRO) has traditionally focused on visual urgency (countdown timers, red buttons) to influence human psychology. Agentic Commerce shifts this to API speed and data accuracy. An AI agent buying on behalf of a user doesn’t care about your hero image; it cares about the JSON-LD payload. If your page takes 3 seconds to load because of third-party tracking scripts, the agent will time out and buy from a faster competitor. In 2026, speed is a transaction requirement, not just a ranking factor.

Can I legally block all scrapers?

The legal ground is shifting. Historically, the hiQ Labs vs. LinkedIn case suggested that scraping public data was legal. However, the late 2025 lawsuit, Google vs. SerpApi, challenges this. Google argues that scraping becomes illegal when it circumvents security measures (like CAPTCHAs) to harvest data. While the courts decide, your best defense is technical (behavioral blocking) rather than legal (Terms of Service), as malicious actors rarely respect the law.

What is the “Dark Funnel” of AI traffic?

The “Dark Funnel” refers to the massive volume of product interactions that happen inside an LLM (Large Language Model) but never register in your analytics. Because AI fetchers often don’t execute client-side JavaScript (where Google Analytics tags live), a bot could recommend your product to 5,000 users, and your dashboard would show zero sessions. This leads to a systematic underestimation of your brand’s reach and the ROI of your content.

How do “Grinch Bots” actually work?

“Grinch Bots” (inventory hoarding bots) abuse the business logic of a shopping cart. They use automated scripts to add high-demand items to a cart, which temporarily reserves the inventory for a set period (e.g., 15 minutes). By constantly refreshing the cart or cycling the items between bot accounts, they create artificial scarcity, preventing real humans from buying the item so the scalper can resell it on a secondary market.

Why is my “Crawl Budget” dropping?

Crawl budget is often reduced by server performance issues. If Googlebot encounters a high rate of “5xx” (server error) responses or slow response times, it will automatically throttle its crawl rate to prevent crashing your site. Additionally, if you have a “Spider Trap” of infinite faceted navigation URLs (e.g., /color/size/material/price), Google may decide your site has low “value density” and crawl it less frequently.

What is “Generative Engine Optimization” (GEO)?

GEO is the practice of optimizing content specifically for AI Overviews and chatbots. Unlike SEO, which targets a list of links, GEO targets the “single right answer.” This involves structuring content in direct Question-Answer formats, using high-authority citations, and ensuring your brand entity is clearly defined in the Knowledge Graph so the AI can confidently cite you as a source.

How do I protect my pricing strategy from competitor bots?

Competitors use scrapers to undercut your prices dynamically. To protect against this, implement rate limiting on your product pages and use honeypot tactics (invisible links that only bots follow) to identify and block scrapers. For high-risk SKUs, you can also serve pricing data as a server-side image or obfuscated code to make it harder for simple scrapers to read. Weigh this carefully, though: it also hides the price from the legitimate shopping agents and search crawlers that rely on machine-readable data.
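A minimal sketch of the honeypot idea: link a trap path invisibly in your HTML, disallow it in robots.txt so compliant crawlers stay away, and flag any client that requests it anyway. The `/internal-price-feed/` path and the in-memory set are hypothetical. A real deployment would push flagged clients (by IP or, better, by fingerprint, given residential proxies) to your firewall or bot-management tool.

```python
# Hypothetical trap path: linked invisibly in the page HTML and disallowed in robots.txt
TRAP_PATH = "/internal-price-feed/"
ROBOTS_RULE = f"User-agent: *\nDisallow: {TRAP_PATH}\n"

flagged_clients = set()


def inspect_request(client_id, path):
    """Flag clients that fetch the trap; return False if the request should be blocked."""
    if path.startswith(TRAP_PATH):
        # Typically a bot that ignored robots.txt and followed the hidden link
        flagged_clients.add(client_id)
    return client_id not in flagged_clients


print(inspect_request("203.0.113.50", "/products/boots"))       # True: normal browsing
print(inspect_request("203.0.113.50", "/internal-price-feed/"))  # False: trap hit
print(inspect_request("203.0.113.50", "/products/boots"))       # False: now blocked
```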

Will AI agents eventually buy products without human approval?

Yes, this is the end state of Agentic Commerce. We are already seeing the rise of “authorized agents” where a user sets a budget (e.g., “Buy any coffee beans under $20 that are highly rated”). As trust in these agents grows, we will move to a “Zero-Interface” economy where the transaction happens entirely via API between the buyer’s bot and the seller’s bot.

How does “Schema” help with bot management?

Schema (Structured Data) acts as a “map” that reduces the computing cost for a bot to understand your page. Instead of parsing the entire HTML DOM to guess which number is the price, the bot reads the offers.price property in the JSON-LD. By making your site easier and cheaper to crawl, you encourage deeper indexing by both search engines and shopping agents.

What is the impact of the “Soft 404” update on my catalog?

Google’s 2025 update means that if you serve a “200 OK” status code for a product page that no longer exists (a “Soft 404”), you are wasting crawl budget: Googlebot has to render the page to realize it’s empty. The best practice is to return a true 404 or 410 (Gone) status code immediately for permanently removed products, or use 301 redirects to relevant categories, sparing the bot the effort of rendering dead pages. Temporarily out-of-stock products are a different case: keep them at 200 and mark them as out of stock in your structured data.
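The status-code decision can be written down as a small routing rule. This sketch assumes a hypothetical product record with `status` and optional `replacement_url` fields. It keeps temporarily out-of-stock items at 200, in line with Google’s guidance to signal temporary unavailability through availability markup rather than an error status, and reserves 301, 404, and 410 for products that are permanently gone or unknown.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Product:
    slug: str
    status: str  # hypothetical values: "active", "out_of_stock", "discontinued"
    replacement_url: Optional[str] = None  # e.g. the closest relevant category


def response_for(product: Optional[Product]):
    """Return (HTTP status, redirect target or None) for a product URL."""
    if product is None:
        return 404, None  # unknown URL
    if product.status in ("active", "out_of_stock"):
        # Temporarily unavailable items stay 200; stock is signaled via schema.org availability
        return 200, None
    if product.status != "discontinued":
        raise ValueError(f"unexpected product status: {product.status!r}")
    if product.replacement_url:
        return 301, product.replacement_url  # permanently gone, relevant alternative exists
    return 410, None  # permanently gone, no good alternative


print(response_for(Product("sandals", "discontinued", "/collections/sandals")))
```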

Join a free demo, personalized to fit your needs
