You’ve likely felt the shift in your own browsing habits. You ask a question, get an instant, synthesized answer, and move on without ever visiting a website. For e-commerce brands, this behavior is the new baseline. The goalpost has moved from simply being “found” on a list to being “cited” in an answer. This transition to Generative Engine Optimization (GEO) requires more than just keywords—it demands a strategy that convinces an AI your brand is the definitive source of truth. This guide is your blueprint for adapting to that reality.

Key Takeaways

- Optimizing for Synthesis: Success in AI search requires structuring data for “chunking” and direct synthesis, moving beyond simple keyword ranking.

- Bridging the Trust Gap: Brand authority and verifiable citations are now critical levers for overcoming consumer skepticism toward AI-generated results.

- The Inverted Pyramid: Adopting a journalism-style content structure—placing direct answers and definitions upfront—significantly increases your probability of being cited.

- Technical Foundations: Robust technical signals, specifically comprehensive Schema markup, Knowledge Graphs, and detailed Product Feeds, are non-negotiable for AI visibility.

- The Freshness Signal: Fresh User-Generated Content (UGC) provides the constant stream of live data that Large Language Models (LLMs) prioritize over static content.

The New Reality: From Search Engines to “Answer Engines”

To optimize for this new landscape, we first need to understand the machine we are working with. The “black box” of search has evolved. We have moved from a model of retrieval to a model of synthesis.

From Indexing to Retrieval-Augmented Generation (RAG)

Traditional search engines operate on an “Index-Retrieve-Rank” model. A crawler finds your page, indexes the content, and ranks it based on keywords and backlinks. Generative engines add a critical fourth step: Synthesis. This architecture is known as Retrieval-Augmented Generation (RAG).

In a RAG system, a user’s query triggers a semantic search for “chunks” of information rather than whole pages.

- Vector Search: The system converts the user’s prompt into a mathematical vector (a series of numbers representing meaning). It then scans its database for content chunks—paragraphs, data tables, product specs—that have a similar vector.

- Context Assembly: The system pulls the most relevant chunks into a “context window.”

- Generation: The Large Language Model (LLM) reads these chunks and writes a new, original answer, citing the chunks it used as sources.

The Strategic Pivot: You are no longer competing for a page rank; you are competing for “chunk inclusion.” Your content must be modular, highly relevant, and dense with information so that the vector search algorithm selects your specific paragraph as the best possible building block for the answer.
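
The retrieval step described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not how any production engine works: the `embed` function is a stand-in that simply counts words (real systems use learned dense embeddings), and the sample chunks are invented.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_chunks(query, chunks, k=2):
    """Return the k chunks whose vectors are closest to the query vector."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


# Hypothetical content chunks taken from three different pages.
CHUNKS = [
    "Our story began in 2012 in a small garage.",
    "Our platform offers a native Klaviyo integration with real-time event sync.",
    "Free shipping on orders over $50.",
]

best = top_chunks("Does the platform have a Klaviyo integration?", CHUNKS, k=1)
print(best[0])
```

The specific, fact-dense chunk wins the comparison, while the brand-story paragraph barely registers. That is the practical meaning of "chunk inclusion."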

The “Query Fan-Out” Phenomenon

A defining capability of 2026-era models is “Query Fan-Out.” When a user asks a complex question—common in B2B or high-consideration e-commerce journeys—the AI does not run a single search. It breaks the prompt down into component parts.

Consider the query: “What is the best enterprise e-commerce platform for a fashion brand with $50M GMV that integrates with Klaviyo?”

A traditional engine looks for keywords matching that string. An AI engine “fans out” the query into sub-tasks:

- Sub-task 1: Identify enterprise platforms suitable for high GMV.

- Sub-task 2: Filter for fashion industry features (visual merchandising, SKU depth).

- Sub-task 3: Verify integration capabilities.

- Sub-task 4: Compare pricing models.

The AI retrieves information for each sub-task separately and then synthesizes the result. This means a brand could be cited in the final answer even if it doesn’t rank for the main head term, provided it has the authoritative “chunk” for one of the sub-tasks (e.g., a technical documentation page about specific integrations).
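
To make the fan-out idea concrete, here is a minimal sketch. It assumes the sub-tasks have already been produced (real engines generate them with a model) and uses a crude keyword-overlap score in place of real retrieval. The page URLs and text are hypothetical.

```python
import re


def tokens(text):
    """Lowercased word set used for a crude overlap score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def best_source(sub_query, pages):
    """Pick the page whose text shares the most words with the sub-query."""
    q = tokens(sub_query)
    return max(pages, key=lambda url: len(q & tokens(pages[url])))


def fan_out(sub_queries, pages):
    """Run one retrieval per sub-task and record which page wins each."""
    return {sq: best_source(sq, pages) for sq in sub_queries}


# Hypothetical pages: a large platform and a smaller brand's docs page.
PAGES = {
    "bigplatform.example/pricing": "enterprise pricing plans for high GMV brands",
    "nichebrand.example/docs/klaviyo": "how to set up the Klaviyo integration step by step",
}

result = fan_out(
    ["enterprise platforms for high GMV", "Klaviyo integration"],
    PAGES,
)
for sub_query, url in result.items():
    print(f"{sub_query!r} -> {url}")
```

The smaller brand never competes on the head term, yet its documentation page is the source selected for the integration sub-task.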

Overcoming “Consensus Bias”

LLMs are probabilistic engines trained to predict the next plausible word. By design, they tend toward the mean; they are “consensus machines.” When asked a general question, the AI will generate an answer that reflects the average of all the training data it has ingested.

For a brand to be cited, you must provide High Information Entropy—data, insights, or perspectives that are unique, counter-intuitive, or highly specific. If your content merely repeats the general advice found on a thousand other blogs, the AI has no incentive to cite you—it already “knows” that information. To earn a citation, you must be the sole source of a specific data point or expert opinion.

The Data Landscape: Why “Zero-Click” Is the New Normal

The shift to AI search is not theoretical; it is measurable. Data collected throughout 2024 and 2025 paints a picture of a digital ecosystem in flux.

The “Zero-Click” Acceleration

The primary economic impact of AI search is the reduction of organic traffic for informational queries. When the answer is provided directly on the Search Engine Results Page (SERP), the user often has no need to click further.

Quantitative analysis indicates that for informational queries triggering an AI Overview, organic Click-Through Rate (CTR) dropped from 1.76% to 0.61%, a decline of about 65%. The impact extends to paid search, with CTRs falling from 19.7% to 6.34% (about 68%) in the same dataset.
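
The relative declines follow directly from the before and after figures quoted above. A quick check, using only those four numbers:

```python
def pct_decline(before, after):
    """Relative decline from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


organic = pct_decline(1.76, 0.61)   # organic CTR, in percent
paid = pct_decline(19.7, 6.34)      # paid CTR, in percent

print(f"Organic CTR decline: {organic:.1f}%")  # Organic CTR decline: 65.3%
print(f"Paid CTR decline: {paid:.1f}%")        # Paid CTR decline: 67.8%
```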

Quality Over Quantity: The “Referral Intent” Shift

However, the narrative of “traffic death” is nuanced. While volume is down, the quality of the remaining traffic is often higher. Recent studies suggest a filtration effect: “low-intent” users are satisfied by the summary, while “high-intent” users, those ready to buy or research deeply, still click through to the source.

This means we must transition our reporting from Volume metrics to Value metrics. A visitor who clicks through an AI citation has already read a summary and is actively seeking more depth. This traffic often converts at substantially higher rates than the “tire-kicker” traffic of the past.

The Trust Paradox

Despite the utility of AI summaries, consumers remain skeptical. This skepticism is a critical leverage point for brands. Surveys reveal that 53% of consumers distrust or lack confidence in AI-powered search results. Furthermore, 61% of users expressed a desire for a feature to toggle AI summaries off entirely.

Strategic Implication: This creates a “flight to authority.” When a user reads a generic AI summary they don’t fully trust, they scan the citations for a brand they recognize. If the AI cites a generic affiliate site, the user may ignore it. If the AI cites a known industry authority, the user is more likely to click. Brand Authority acts as the bridge over the trust gap. In a world of synthetic answers, the reputation of the human source becomes the primary signal of credibility.

Tip 1: Master the “Citations, Quotes, and Stats” Framework

If the goal is to be cited, we cannot simply write for humans and hope the machine keeps up. We must structure our content to be “machine-readable” while maintaining the quality human readers expect.

The “Citations, Quotes, and Stats” Rule

Landmark research empirically tested which on-page tactics most improved visibility in AI responses. The findings debunked many traditional SEO myths, revealing that AI models are biased toward rigor and authority.

Three specific tactics drove the highest increase in visibility:

- Cite Sources (+30-40% Impact): Adding citations to other authoritative sources (e.g., government data, research papers) signals rigorous research to the LLM. It tells the AI, “This content is grounded in fact.”

- Statistics Addition (+30-40% Impact): Replacing qualitative text (“many people”) with quantitative data (“53% of consumers”) increases “Information Density.” High-density chunks are more likely to be selected for synthesis.

- Quotation Addition (+30-40% Impact): Including quotes from known experts associates the content with the expert’s “Entity Node” in the Knowledge Graph.

Advisor Tip: Audit your “Thought Leadership” content. Are you making naked assertions, or are you backing them with data? To win the citation, you must act like a journalist, not just a blogger.

“Vibe Coding”: Conversational Resonance

As search engines shift to “AI Mode”—a conversational, interactive interface—the tone of your content becomes a ranking factor. This concept, sometimes called “Vibe Coding,” refers to optimizing the style of the writing to match the conversational intent of the user.

If a user asks a stressed query like “how to fix a drop in sales,” a stiff, corporate response will likely be deprioritized. The AI looks for content that matches the semantic “vibe” of the prompt.

- Natural Language: Use first-person plural (“We found that…”) and direct address (“You should consider…”).

- Empathetic Context: Acknowledge the why behind the query.

The “Inverted Pyramid” and Answer Optimization

LLMs process text linearly, but they heavily prioritize information found early in the document structure. They also prefer “definitive” answers that can be easily extracted. To win the citation, we must structure content using the Inverted Pyramid style of journalism.

The “First 50 Words” Rule: Every informational article must begin with a Direct Answer Block. If the target query is “How to calculate ROI,” the very first paragraph should be a 50-word definition and formula.

- Why: This provides the RAG system with a perfect, pre-formatted “chunk” to drop into the AI Overview.

- Implementation: Consider using a bolded introductory paragraph labeled “Key Takeaway” or “Quick Answer.”
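
A content audit for this rule can be partly automated. The sketch below assumes paragraphs are separated by blank lines and treats 50 words as the threshold. It checks only length, so a human still needs to confirm that the opening actually answers the query. The sample article is invented.

```python
def opening_word_count(article_text):
    """Count the words in the first paragraph (paragraphs split on blank lines)."""
    first_paragraph = article_text.strip().split("\n\n")[0]
    return len(first_paragraph.split())


def has_direct_answer_block(article_text, max_words=50):
    """True when the opening paragraph is short enough to lift as one chunk."""
    return opening_word_count(article_text) <= max_words


# Hypothetical article targeting the query "How to calculate ROI".
ARTICLE = """ROI (return on investment) measures the profit an investment generates relative to its cost. Formula: ROI = (net profit / cost of investment) x 100.

The rest of the article expands on inputs, edge cases, and worked examples."""

print(has_direct_answer_block(ARTICLE))
```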

Structuring for Scannability: AI models are excellent at parsing HTML structures. You can make their job easier (and your citation more likely) by using:

- Lists (<ol>, <ul>): Use these for any steps or features. AI agents often strip these lists directly for summaries.

- Tables (<table>): Comparative data must be in table tags. Tables are high-density information formats that AI models prioritize for “Comparison” queries (e.g., “Brand A vs Brand B”).

Tip 2: Solidify Technical Foundations & Entity Resolution

While content is the interface, technical infrastructure is the bedrock. If the AI cannot parse the “Entity Structure” of your site, your content remains invisible.

Mastering Schema.org

Structured data (Schema.org) has evolved from a “rich snippet” generator to the primary language of AI comprehension. It is how we disambiguate our entities for the machine.

Critical Schema Types for GEO:

- FAQPage: This is the most direct way to feed a conversational engine. By marking up Q&A sections with FAQ schema, you explicitly hand the AI a list of questions and answers it can use to satisfy user prompts.

- Organization: You must define your brand entity. This schema should include your logo, social profiles (sameAs), and contact info. This feeds the Knowledge Graph, ensuring the AI knows who you are.

- Author / TechArticle: Clearly identifying the author (a Person entity linked to their profile page through the article's author property) establishes the E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) signals necessary for the AI to trust the content.
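
As an illustration of the FAQPage type, the sketch below builds the JSON-LD from a list of question and answer pairs. The `faq_jsonld` helper and the sample questions are hypothetical. The `FAQPage`, `Question`, `Answer`, `mainEntity`, and `acceptedAnswer` names come from Schema.org.

```python
import json


def faq_jsonld(pairs):
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Hypothetical Q&A content for a product support page.
markup = faq_jsonld([
    ("Do your shoes run narrow?", "Most styles run true to size; the Oxford runs slightly narrow."),
    ("What is your return window?", "Returns are accepted within 30 days of delivery."),
])

# The resulting JSON goes inside a <script type="application/ld+json"> tag.
print(json.dumps(markup, indent=2))
```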

Crawlability: The JavaScript Barrier

While Googlebot is adept at rendering JavaScript, the broader ecosystem of AI agents (OpenAI’s GPTBot, Anthropic’s ClaudeBot, Perplexity’s PerplexityBot) varies in sophistication.

The Rendering Risk: Content that requires a user interaction to load (e.g., “Click to read more,” accordions, tabs) or relies heavily on client-side JavaScript rendering is often invisible to these crawlers.

The Fix: Critical content—especially your “Direct Answers” and data tables—must be present in the Server-Side Rendered (SSR) HTML.

Robots.txt Strategy: You must make a strategic decision on which agents to allow. Blocking GPTBot keeps your content out of future model training, but check each vendor's crawler documentation before blocking anything: OpenAI, for example, documents separate user agents for training (GPTBot) and for search (OAI-SearchBot), and blocking the search agent excludes you from real-time citations in ChatGPT Search. Given the traffic shift, the recommendation is to allow the major search agents (OpenAI, Google, Bing, Perplexity) while blocking aggressive, non-attributing scrapers.
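
Before deploying a robots.txt change, it is worth confirming that the rules do what you intend. The sketch below uses Python's standard `urllib.robotparser` against an example policy. `ExampleScraperBot` is a made-up agent name standing in for whichever scraper you want to exclude, and the URL is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Example policy: allow two AI agents, block one hypothetical scraper.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: ExampleScraperBot
Disallow: /
"""


def allowed(agent, url, robots_txt=ROBOTS_TXT):
    """Check whether `agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


url = "https://shop.example/products/oxford"
for agent in ("GPTBot", "PerplexityBot", "ExampleScraperBot"):
    print(agent, allowed(agent, url))
```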

Entity Resolution and Graph Management

The AI world is built on a Knowledge Graph—a database of entities (people, places, companies) and their connections. Optimization requires “Entity Resolution”—ensuring your brand entity is distinct, accurate, and authoritative in this graph.

- Consistency is Key: The AI validates facts by cross-referencing. If your pricing is listed as “$50” on your site but “$40” on a G2 review, the AI loses confidence and may exclude the data point. You must audit your NAP (Name, Address, Phone) and core attributes across the entire web (LinkedIn, Crunchbase, Review Sites) to ensure total alignment.

- The “SameAs” Triangulation: In your Organization schema, strictly use the sameAs property to link to your definitive third-party profiles (Wikipedia, Wikidata, Crunchbase). This tells the AI: “The entity on this website is the exact same entity as the one in your Knowledge Graph entry.”
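
A minimal sketch of Organization markup with `sameAs` links follows. Every name, URL, and identifier is a placeholder to be replaced with your real profiles. The property names (`name`, `url`, `logo`, `sameAs`) are standard Schema.org.

```python
import json

# Placeholder brand details; replace every value with your own.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Brand",
    "url": "https://www.example.com",
    "logo": "https://www.example.com/logo.png",
    "sameAs": [
        "https://www.wikidata.org/wiki/Q00000000",
        "https://www.crunchbase.com/organization/example-brand",
        "https://www.linkedin.com/company/example-brand",
    ],
}


def missing_profiles(markup, required_hosts):
    """List required third-party hosts that no sameAs URL points to."""
    links = markup.get("sameAs", [])
    return [host for host in required_hosts if not any(host in link for link in links)]


print(json.dumps(organization, indent=2))
print(missing_profiles(organization, ["wikidata.org", "crunchbase.com", "wikipedia.org"]))
```

The small `missing_profiles` helper is one way to audit which definitive profiles are not yet linked.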

Tip 3: Optimize the Product Knowledge Graph

For e-commerce brands, the user journey is shifting from “searching for a category” to “asking for a specific recommendation.” In this agentic web, your Product Feed—submitted to Merchant Center, ChatGPT, and others—is your most valuable SEO asset.

The Product Feed is the New SEO

In 2026, optimizing your feed isn’t just about Google Shopping ads; it’s about feeding the synthesis engine. OpenAI and other platforms have released specific requirements for feeds that differ from traditional SEO.

ChatGPT Shopping Specifications: According to 2025 developer documentation, AI shopping agents prioritize feeds that include “Performance Signals” and granular attributes.

- Popularity Score: ChatGPT’s feed specification now accepts a “popularity_score” (rated 0-5). This allows merchants to explicitly signal their best-sellers to the AI, directly influencing recommendation logic.

- Natural Language Titles: Traditional SEO titles like “Men’s Shoe – Black – Size 10” are insufficient. AI agents perform better with descriptive, natural language titles that match conversational queries.

- Old: “Men’s Shoe – Black – Size 10”

- GEO Optimized: “Men’s Non-Slip Black Leather Oxford Dress Shoe for Work – Size 10”

- Why: If a user asks, “I need a non-slip shoe for standing all day,” the AI looks for “non-slip” and “work” in the structured title to make the match.
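
One way to produce such titles at scale is to compose them from the attributes you already store. In this sketch the attribute names, the `natural_title` helper, and the score value are assumptions for illustration. Only the `title` and `popularity_score` field names come from the discussion above.

```python
def natural_title(gender, features, color, material, product, use_case, size):
    """Compose a descriptive, conversational product title from attributes."""
    parts = [gender, *features, color, material, product]
    return f"{' '.join(parts)} for {use_case} - Size {size}"


def matches(query_terms, title):
    """True when every query term appears somewhere in the title."""
    title_lower = title.lower()
    return all(term.lower() in title_lower for term in query_terms)


item = {
    "title": natural_title(
        "Men's", ["Non-Slip"], "Black", "Leather", "Oxford Dress Shoe", "Work", "10"
    ),
    "popularity_score": 4.6,  # hypothetical value on the 0-5 scale
}

print(item["title"])
print(matches(["non-slip", "work"], item["title"]))
print(matches(["non-slip", "work"], "Men's Shoe - Black - Size 10"))
```

The old-style title fails the match because the attributes a shopper would mention never appear in it.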

Optimizing for Visual Search

With tools like Google Lens and Circle to Search becoming dominant, images are becoming queries. Data from late 2025 shows that Google Lens now processes 12 billion searches per month, a 4x increase since 2021.

To capture this traffic, your image metadata must be “descriptive,” not just “keyword-rich.”

- Descriptive Alt Text: Instead of just “floral dress,” use “Woman wearing floral sundress walking on a beach at sunset.” This helps the AI match the “vibe” or context of a user’s visual query (e.g., “Find me a dress for a beach wedding”).

- 3D Assets: Shoppers interact with 3D images 50% more than static ones. Google’s Shopping Graph now heavily prioritizes 3D assets (.glb files) in “rich” results for home goods and footwear.

Tip 4: Win the “Comparison” Battleground

A large share of high-intent queries is comparative (e.g., “Brand A vs. Brand B” or “Best CRM for small business”). If you do not own this conversation, the AI will synthesize an answer based on third-party aggregators or, worse, your competitors’ content.

The “Vs.” Strategy: Honesty as a Ranking Factor

The strategy here is counter-intuitive: You must create honest, data-backed comparison pages that genuinely acknowledge where a competitor might be strong.

The “Trust Score” Dynamic: AI models are trained to detect bias. A page that claims your product is perfect and the competitor is useless is flagged as “low-trust” marketing fluff. However, a page that says, “Brand B is an excellent choice for hobbyists due to its free tier, while our platform is built for enterprise scale,” mimics the balanced tone of a neutral observer. This increases the probability that the AI will cite your page as an objective source of truth.

The Power of HTML Tables

Structure is destiny. When an AI attempts to answer a “Best X vs. Y” query, it looks for structured data comparisons.

- The Tactic: Use clean HTML <table> tags to compare features, pricing, and integrations side-by-side.

- The Result: AI agents often “scrape” these tables directly to generate the comparison matrix shown in the answer. Pages with comparison tables are significantly more likely to be cited in “Best of” lists than those with dense paragraphs of text.
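
If your comparison data lives in a spreadsheet or CMS, a small helper can render it as clean table markup. This is a sketch: the `render_table` function and the sample rows are hypothetical, and your templating system may already offer an equivalent.

```python
from html import escape


def render_table(headers, rows):
    """Render headers and rows as a plain HTML table, escaping cell text."""
    head = "".join(f"<th>{escape(h)}</th>" for h in headers)
    body = "".join(
        "<tr>" + "".join(f"<td>{escape(str(cell))}</td>" for cell in row) + "</tr>"
        for row in rows
    )
    return f"<table><thead><tr>{head}</tr></thead><tbody>{body}</tbody></table>"


# Hypothetical comparison data.
table_html = render_table(
    ["Feature", "Our Platform", "Brand B"],
    [
        ["Best for", "Enterprise scale", "Hobbyists"],
        ["Entry pricing", "Custom quote", "Free tier"],
    ],
)
print(table_html)
```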

Tip 5: Leverage Reviews & UGC as “Freshness” Fuel

While technical schema and structured data provide the framework for AI visibility, User-Generated Content (UGC) provides the fuel. Large Language Models (LLMs) have a “freshness bias”—they prioritize data that reflects the current reality over static, outdated pages.

Reviews as Training Data

Static product descriptions rarely change. Reviews, however, provide a constant stream of live data, full of the semantic nuance that AI models use to train their understanding of a product. When a customer writes, “This running shoe has great arch support but runs a bit narrow,” they are creating a new data point that the AI indexes.

As Ben Salomon, an e-commerce expert, notes:

“In an era of deep skepticism, your existing customers have become your most effective and most trusted marketers. Reviews are no longer just social proof; they’re data for discoverability. When a shopper asks an AI, ‘What is the best shoe for narrow feet?’, the engine doesn’t look at your marketing copy—it looks at the consensus of your customers.”

Verified Data: The Conversion Impact

The value of reviews extends beyond just feeding the AI. They are the primary driver of conversion once the user lands on your site.

- Conversion Lift: Shoppers who see reviews and UGC convert at a rate 161% higher than those who don’t.

- The Power of Quantity: You don’t need thousands of reviews to see an impact. Reaching just 10 reviews on a product results in a 53% uplift in conversion.

Strategic Application: Smart Prompts

To maximize this, you cannot rely on generic review requests. You need “high-entropy” reviews—reviews that mention specific attributes (fit, material, use case).

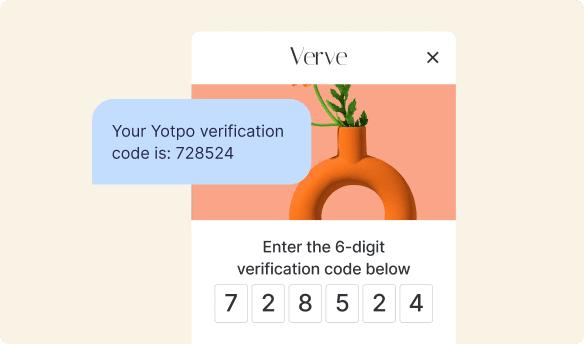

- The Tactic: Utilize AI-powered Smart Prompts in your review requests. These prompts ask tailored questions like “How was the fit?” or “Did the color match the photos?”

- The Result: Smart Prompts are 4x more likely to capture mentions of high-value topics, which in turn feed the long-tail queries AI users are asking.

Pro Tip: Speed matters. Collecting reviews via SMS review requests (powered by integrations with tools like Klaviyo or Attentive) sees a 66% higher conversion rate than email requests, ensuring your fresh data hits the feed faster.

Tip 6: Measure Success in the “Dark Traffic” Era

The era of precise, pixel-perfect attribution is fading. We are entering the age of “Dark Traffic” and “Correlated Influence,” where the path from search to purchase is obscured by the AI interface.

The Tracking Challenge

Traffic from platforms like ChatGPT, Claude, or Perplexity often arrives without clear referrer headers, appearing as “Direct” traffic in your analytics. Furthermore, Google Search Console lumps AI Overview impressions with standard web results, making it difficult to isolate the specific impact of AI visibility.

The Solution: Zero-Party Data & Citation Frequency

To navigate this, we must triangulate success using a mix of qualitative and quantitative signals.

- Zero-Party Data Attribution: The most reliable tracking tool in 2026 is simply asking the customer.

- Action: Add a “How did you hear about us?” field to your post-purchase survey or demo request form.

- Options: Include specific options for “ChatGPT / AI Search,” “Perplexity,” and “Google AI Overview.” Brands implementing this are already seeing significant attribution to these channels that traditional analytics miss.

- Tracking “Citation Frequency”: Move your KPIs from “Rank” to “Share of Voice.”

- New Metric: Citation Frequency. For a set of 50 core keywords, how often is your brand cited in the AI answer?

- Tools: Use emerging AI tracking tools (or manual monthly audits) to monitor this. If you are cited in 30% of answers for your category, that is your new “Rank 1”.
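
Citation Frequency is simple to compute once you have logged which sources each AI answer cited. The sketch below assumes you have recorded, by hand or through a tool, the cited domains for each tracked query. The queries and domains are invented.

```python
def citation_frequency(answers, brand_domain):
    """Share of tracked queries whose AI answer cites `brand_domain`."""
    if not answers:
        return 0.0
    cited = sum(1 for sources in answers.values() if brand_domain in sources)
    return cited / len(answers)


# Hypothetical audit log: query -> domains cited in the AI answer.
AUDIT = {
    "best shoes for nurses": ["yourbrand.example", "reviewsite.example"],
    "best shoes for wide feet": ["competitor.example"],
    "non-slip work shoes": ["yourbrand.example"],
    "oxford vs derby shoes": ["encyclopedia.example"],
}

share = citation_frequency(AUDIT, "yourbrand.example")
print(f"Citation frequency: {share:.0%}")
```

Tracking this number month over month, on a fixed query set, gives you a trend line that does not depend on referrer data.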

Tip 7: Prepare Infrastructure for Agentic Commerce

The shift we are witnessing in 2026 is merely the precursor to a more radical transformation: Agentic Commerce. By 2027, we anticipate that users will not just ask AI for information; they will ask AI to execute tasks.

- Current State: “What is the best CRM?”

- Future State: “Find the best CRM for my budget and sign me up for a trial.”

The “Machine-Readable” Mandate

To survive this shift, your website must evolve from a brochure for humans into an API for agents.

- Agent Adoption: The “Agentic AI” market in retail has already reached $46.7 billion in 2025, with 23% of Americans reporting they have made a purchase using an AI assistant in the past month.

- The Strategy: Ensure your core business terms—pricing, return policies, and shipping tiers—are structured in clear, machine-readable formats (like JSON-LD or standardized tables). If an autonomous agent cannot “read” your shipping cost in milliseconds, it will likely abandon the transaction in favor of a competitor whose terms are transparent to the bot.
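
As a sketch of what "machine-readable terms" can look like, the example below expresses price, shipping, and return policy as Schema.org JSON-LD, then reads the total cost back the way an agent might. All values are placeholders, and `total_cost` is a hypothetical helper. The types and properties (`Offer`, `OfferShippingDetails`, `MerchantReturnPolicy`, and so on) come from Schema.org.

```python
import json

# Placeholder commercial terms expressed as Schema.org JSON-LD.
offer = {
    "@context": "https://schema.org",
    "@type": "Offer",
    "price": "89.00",
    "priceCurrency": "USD",
    "shippingDetails": {
        "@type": "OfferShippingDetails",
        "shippingRate": {"@type": "MonetaryAmount", "value": "5.00", "currency": "USD"},
        "shippingDestination": {"@type": "DefinedRegion", "addressCountry": "US"},
    },
    "hasMerchantReturnPolicy": {
        "@type": "MerchantReturnPolicy",
        "applicableCountry": "US",
        "returnPolicyCategory": "https://schema.org/MerchantReturnFiniteReturnWindow",
        "merchantReturnDays": 30,
    },
}


def total_cost(offer_markup):
    """Read price plus shipping straight from the structured markup."""
    price = float(offer_markup["price"])
    shipping = float(offer_markup["shippingDetails"]["shippingRate"]["value"])
    return price + shipping


print(json.dumps(offer, indent=2))
print(total_cost(offer))
```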

Tip 8: Implement Defensive GEO (Protecting Branded Traffic)

While most GEO advice focuses on discovery, a critical new frontier is Defense. In late 2025, the share of “Navigational” queries (searches for specific brand names) triggering AI Overviews skyrocketed from under 1% to over 10%.

The Risk: Instead of clicking your homepage, a user searching for “Brand X Reviews” now sees an AI summary of your reputation. If that summary is based on outdated Reddit threads or negative press, you lose the customer before they ever visit your site.

The Defensive Playbook:

- Own the “About” Node: You must explicitly define who you are to the Knowledge Graph. Ensure your “About Us” page is robust, utilizing Organization schema to explicitly state your value proposition, founding date, and core services.

- Wiki-Strategy: AI models heavily weight Wikipedia and Wikidata as “ground truth.” If you qualify for a Wikipedia page, maintain it rigorously. If not, ensure your profiles on Crunchbase and Bloomberg are updated, as these are “Tier 1” sources for AI training.

Tip 9: Execute Digital PR 2.0 (Feeding the Training Data)

Traditional PR was about getting a link. Digital PR in the AI era is about getting a fact indexed.

The “Proprietary Data” Moat: AI models are hungry for unique data points they cannot hallucinate. A 2025 study found that brands publishing original, proprietary data (surveys, internal benchmarks) gain 45% more AI citations than those publishing generic advice.

Action Plan:

- Publish “State of the Industry” Reports: Once a quarter, release a report using your own internal data (anonymized).

- The “Stat Magnet” Tactic: When you release a press release, include a bulleted list of “Key Statistics” at the top. This structured format allows AI bots (like Perplexity) to easily extract your stats and cite you as the primary source when users ask questions like “What are the latest trends in [Industry]?”

Advanced Tactics: Multimodal & Paid AI

Optimizing for Multimodal & Video Synthesis

As of 2026, search has moved beyond text. With the release of fully conversational visual modes in Google Gemini and ChatGPT, the “input” for a search is just as likely to be a photo or a video clip as a typed query.

- The Tactic: Transcript Optimization. You must treat your video transcripts as high-value blog content. Ensure your scripts include clear definitions and “Direct Answer” blocks.

- Technical Requirement: Use VideoObject schema with the transcript and hasPart (Key Moments) properties. This signals to the AI exactly where the “answer chunks” are located within the video file.

- Multimodal “Vibe” Matching: Move beyond literal Alt Text. Use Contextual Alt Text that describes the scene and style (e.g., “Woman wearing cherry red stiletto heels at a formal evening gala”). This allows the multimodal AI to match the “formal evening” context of the user’s photo to your product.
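
The VideoObject markup described above can be generated from a transcript and a list of key moments. In this sketch the `video_jsonld` helper, the sample video, and the `?t=` deep-link format are assumptions (the right URL pattern depends on your video host). `VideoObject`, `Clip`, `transcript`, `hasPart`, `startOffset`, and `endOffset` are Schema.org names.

```python
import json


def video_jsonld(name, content_url, transcript, moments):
    """Build VideoObject JSON-LD; `moments` holds (title, start_s, end_s) tuples."""
    return {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "contentUrl": content_url,
        "transcript": transcript,
        "hasPart": [
            {
                "@type": "Clip",
                "name": title,
                "startOffset": start,
                "endOffset": end,
                "url": f"{content_url}?t={start}",  # assumed deep-link format
            }
            for title, start, end in moments
        ],
    }


# Hypothetical video with two answer-bearing segments.
video = video_jsonld(
    "How to Calculate ROI",
    "https://www.example.com/videos/roi.mp4",
    "ROI measures the profit an investment generates relative to its cost...",
    [("What is ROI?", 0, 45), ("The ROI formula", 45, 120)],
)
print(json.dumps(video, indent=2))
```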

The Frontier of Paid AI: “Sponsored Citations”

As organic “ten blue links” fade, the “Answer Layer” is becoming monetized. We are entering the era of Adver-Synthesis, where brands pay for “Sponsored Citations.”

- Perplexity’s “Sponsored Questions”: Brands can now sponsor follow-up questions (e.g., “What is the best enterprise plan for this software?”), steering the journey toward their value proposition.

- The Insight: You cannot buy a reputation the AI doesn’t believe you have. If your organic Knowledge Graph entity is weak, the AI may refuse to serve your “Sponsored Citation” for high-trust queries.

Bonus: Vertical-Specific GEO Strategies

One size does not fit all. AI models prioritize different data signals depending on the “stakes” of the query.

Fashion & Apparel: Context is King

- The GEO Tactic: Optimize for “Vibe Queries” (e.g., “outfits for a rustic winter wedding”).

- Implementation: Ensure your product descriptions and “Style Guide” blog posts explicitly use occasion-based keywords.

Beauty & Skincare: The Ingredient Knowledge Graph

- The GEO Tactic: Build an “Ingredient Glossary.” Create dedicated pages for key ingredients (e.g., “Hyaluronic Acid benefits”) linked to your products.

- Stat: 89% of B2B and high-consideration buyers consider AI search a top source for research.

Electronics & Tech: Structured Specs

- The GEO Tactic: “Spec Sheet” Schema. Ensure your technical specifications (battery life, compatibility) are presented in a table and marked up with structured Product schema. AI agents favor structured comparison data and will often lift your entire table into the answer.

The 30-Day GEO Implementation Checklist

Adapting to AI search can feel overwhelming. Use this step-by-step checklist to pivot your strategy.

Week 1: The Technical Foundation

- [ ] Audit Robots.txt: Ensure GPTBot, GoogleOther, and PerplexityBot are allowed to crawl your site.

- [ ] Schema Implementation: Add Organization schema to your homepage and FAQPage schema to your top 5 support pages.

- [ ] Product Feed Update: Update your product feeds (Merchant Center and, where you submit one, ChatGPT) to include natural language titles, and add popularity scores where the feed specification accepts them.

Week 2: Content Retrofit

- [ ] Inverted Pyramid Audit: Rewrite the introductions of your top 10 traffic-driving blog posts. Ensure they start with a direct “Answer Block” (first 50 words).

- [ ] Add Data Tables: Identify 3 “Comparison” or “Best of” articles and convert text comparisons into HTML <table> tags.

- [ ] Citation Check: Add at least 2 external citations to authoritative non-competitor sources in your top articles.

Week 3: The “Freshness” Boost

- [ ] Launch SMS Reviews: Activate an SMS review request flow (via integrations) to increase review velocity.

- [ ] Enable Smart Prompts: Update your review generation settings to ask specific questions (e.g., “How was the fit?”) to generate high-entropy content.

Week 4: Measurement & Entity Resolution

- [ ] Zero-Party Data: Add the “How did you hear about us?” field to your checkout flow.

- [ ] NAP Audit: Verify that your Name, Address, and Phone number are identical across your website, Google Business Profile, and major review sites.

How Yotpo Supports Your GEO Strategy

While GEO strategies get users to your site, the challenge is converting and retaining them. Yotpo’s platform is engineered to support this new ecosystem by providing the structured, high-velocity content that AI engines crave.

Yotpo Reviews leverages AI to organize unstructured customer feedback into clear “topics” (like Fit or Quality) via Reviews Atlas, making it easier for search AI to synthesize your product’s reputation. Simultaneously, Yotpo Loyalty ensures that the “high-intent” traffic you earn doesn’t bounce. With acquisition costs rising as organic volume dips, a 5% increase in retention can boost profits by 25% to 95%, turning your AI-driven visitors into lifetime advocates.

Conclusion

The transition to Generative Engine Optimization is a mandate for quality. The “tricks” of the past—keyword stuffing, link schemes, thin content—are liabilities in an AI world. The winning strategy for 2026 and beyond is to build a brand that is authoritative, data-rich, and machine-readable.

You must become the source of truth that the AI needs to cite to do its job. By focusing on unique data (Information Gain), structured delivery (Schema/Feeds), and verified reputation (Reviews/Digital PR), you secure your place not just on the search results page, but in the very mind of the machine.

Frequently Asked Questions

What is the difference between SEO and GEO?

SEO (Search Engine Optimization) focuses on ranking a list of links by targeting keywords. GEO (Generative Engine Optimization) focuses on optimizing content to be synthesized into a direct answer by AI. GEO prioritizes “Information Gain” (unique facts), citations, and structural clarity over keyword density.

How do AI Overviews impact organic traffic?

Data from late 2025 shows a nuanced picture. While organic CTR for informational queries with AI Overviews has dropped by over 60%, the intent of the remaining traffic is higher. Furthermore, navigational AI Overviews (searches for your brand) have surged to 10.33% of queries, meaning the AI is summarizing your brand reputation before users even click your site.

Does schema markup help with ChatGPT?

Yes. While ChatGPT processes natural language, it relies on structured data (like Product and FAQPage schema) to accurately parse entities, pricing, and specifications without hallucinating.

Can I block AI bots from crawling my site?

Yes, via robots.txt, but blanket blocking is generally not recommended for e-commerce brands. While 5.89% of sites now block GPTBot, blocking the agents that power AI search excludes you from real-time citations in the tools your customers use. With 23% of Americans already shopping via AI agents, blocking those bots effectively removes your products from this growing shelf.

How does “Query Fan-Out” change my keyword strategy?

You can no longer just target “head terms.” You must create content that answers the specific sub-questions an AI might “fan out” to. For example, instead of just “Best Shoes,” create pages for “Best Shoes for Wide Feet,” “Best Shoes for Standing All Day,” and “Best Shoes for Nurses” to capture the specific sub-tasks the AI is trying to solve.

Is “Voice Search” optimization the same as GEO?

They are converging. With 50% of global searches now conducted via voice, the conversational tone required for GEO (“Vibe Coding”) perfectly matches voice intent. However, voice results load 52% faster than text results, making technical speed optimization (Core Web Vitals) even more critical for voice visibility.

Should I use AI to write my content?

You can use AI for outlines and drafts, but purely AI-generated content often lacks “Information Gain.” To win citations, you must add human expertise, proprietary stats, or unique opinions that the AI training data doesn’t already have. Brands publishing proprietary data see 45% more citations than those using generic content.

How do I optimize for “Multimodal” search (Text + Image)?

Ensure your images have descriptive filenames and alt text that describe the context (e.g., “Summer wedding guest dress”) rather than just the product SKU. This helps AI match your image to complex, multi-layered queries like “Find me a dress for a beach wedding.”

What is the most important schema for B2B SaaS?

FAQPage and TechArticle are critical. They allow you to feed direct answers to complex technical questions. Also, ensure your Organization schema is robust to establish your entity’s authority in the Knowledge Graph.

How can I track “Dark Traffic” from AI?

Implement “Zero-Party Data” collection. Add a field to your checkout or lead forms asking, “How did you hear about us?” with specific options for “ChatGPT/AI Search.” This catches the attribution that analytics software misses.

Does “Brand Sentiment” affect AI rankings?

Yes. AI models are trained on the open web, including Reddit and review sites. Consistently negative sentiment can teach the model to associate your brand with low quality, potentially reducing your visibility in “Best of” recommendations.

Can I pay to be in an AI Overview?

Currently, ad inventory is appearing within or above AI overviews (ads appear on 25% of AIO SERPs as of late 2025), but you cannot yet directly pay to be the “organic” cited source. That must be earned through authority.

How does “Video” content fit into GEO?

Video is increasingly being “watched” by AI agents to extract answers. Providing transcripts and using VideoObject schema with “Key Moments” allows the AI to “read” your video and cite specific clips as answers.

What is the biggest mistake brands make with GEO?

Ignoring the “Entity” layer. Brands often focus on content but fail to clean up their business data (NAP, Pricing) across the web. If the AI finds conflicting data about your price or features on different sites, it will lose confidence and stop citing you.

Join a free demo, personalized to fit your needs
